Using Scala version 2.10.5 (Java HotSpot(TM) 64-Bit Server VM, Java 1.7.0_79)
Type in expressions to have them evaluated.
16/01/04 13:49:40 WARN Utils: Service 'sparkDriver' could not bind on port 0. Attempting port 1.
16/01/04 13:49:40 ERROR SparkContext: Error initializing SparkContext.

log4j:WARN No appenders could be found for logger (2.lib.MutableMetricsFactory).
log4j:WARN Please initialize the log4j system properly.
Using Spark's repl log4j profile: org/apache/spark/log4j-defaults-repl.properties
To adjust logging level use sc.setLogLevel("INFO")

This is the second time I'm running Spark.

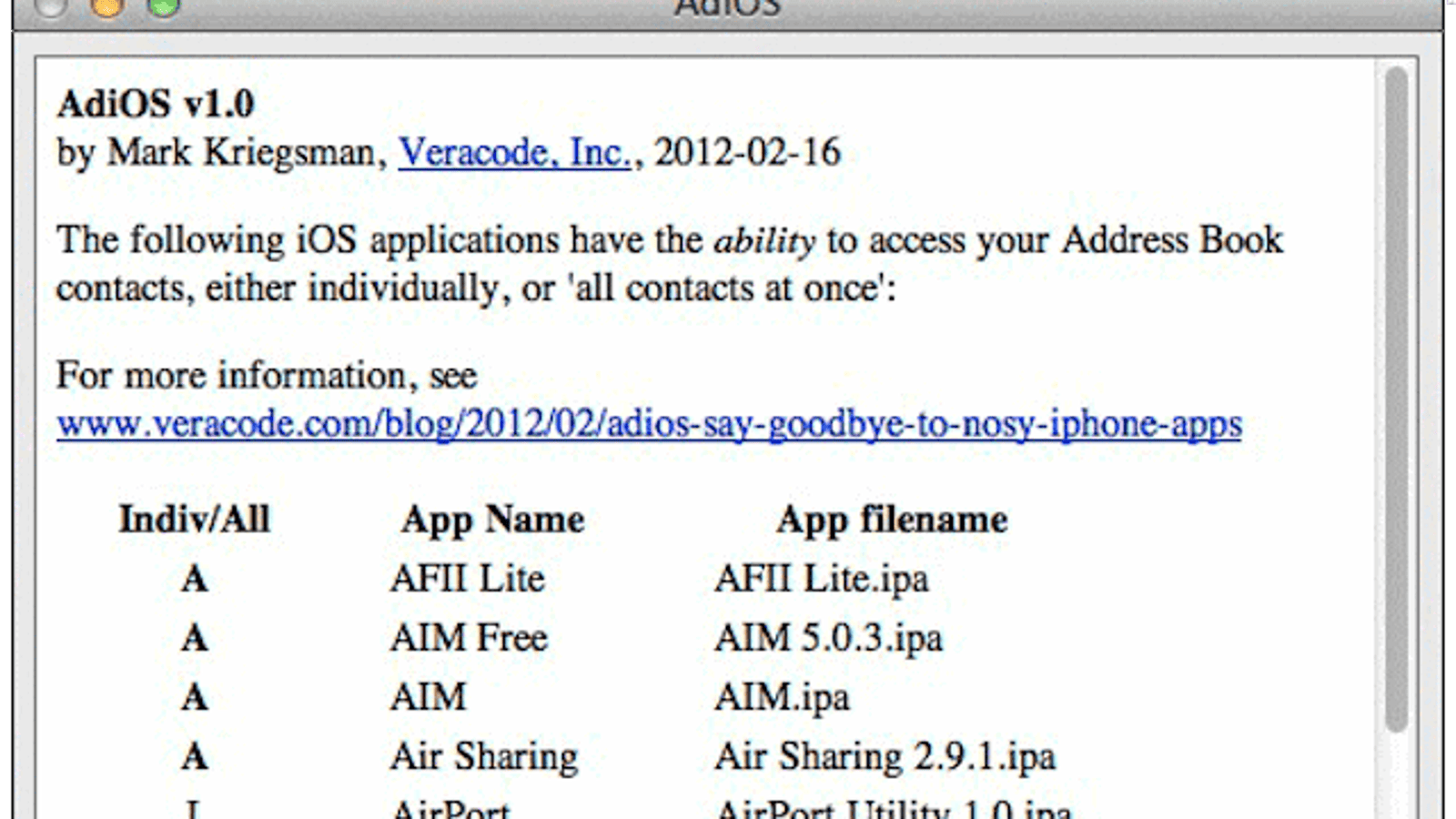


I also tried installing different versions of Spark, but they all fail with the same error.


I tried to start Spark 1.6.0 (spark-1.6.0-bin-hadoop2.4) on Mac OS X Yosemite 10.10.5 using "./bin/spark-shell".